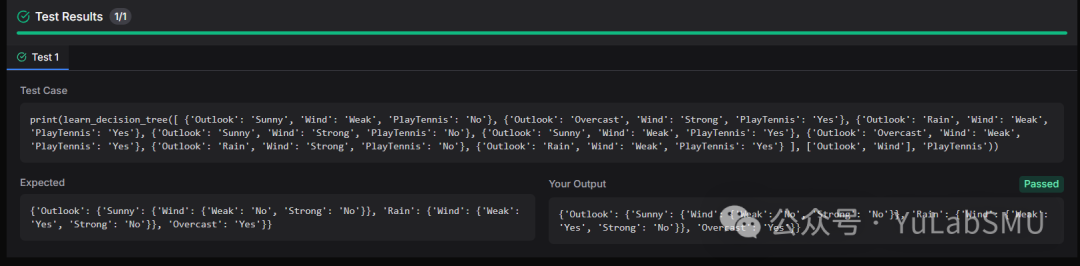

Write a Python function that implements the decision tree learning algorithm for classification. The function should use recursive binary splitting based on entropy and information gain to build a decision tree. It should take a list of examples (each example is a dict of attribute-value pairs) and a list of attribute names as input, and return a nested dictionary representing the decision tree.

Example:

Input:

examples = [

{'Outlook': 'Sunny', 'Temperature': 'Hot', 'Humidity': 'High', 'Wind': 'Weak', 'PlayTennis': 'No'},

{'Outlook': 'Sunny', 'Temperature': 'Hot', 'Humidity': 'High', 'Wind': 'Strong', 'PlayTennis': 'No'},

{'Outlook': 'Overcast', 'Temperature': 'Hot', 'Humidity': 'High', 'Wind': 'Weak', 'PlayTennis': 'Yes'},

{'Outlook': 'Rain', 'Temperature': 'Mild', 'Humidity': 'High', 'Wind': 'Weak', 'PlayTennis': 'Yes'}

],

attributes = ['Outlook', 'Temperature', 'Humidity', 'Wind']

Output:

{

'Outlook': {

'Sunny': {'Humidity': {'High': 'No', 'Normal': 'Yes'}},

'Overcast': 'Yes',

'Rain': {'Wind': {'Weak': 'Yes', 'Strong': 'No'}}

}

}

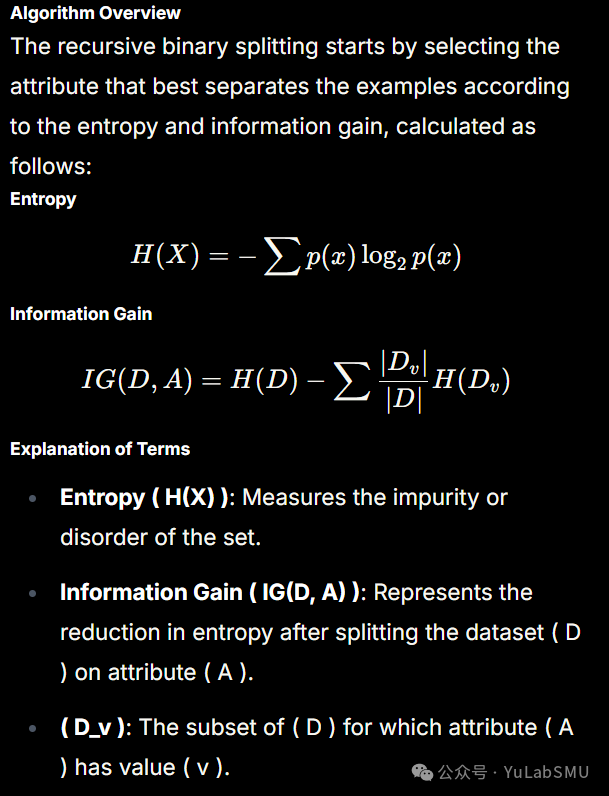

这道题要写一个决策树,决策树的构建采用递归分裂方法,核心步骤包括:

- 选择最佳分裂特征(基于信息增益、基尼系数等)

- 按特征划分数据集

- 递归构建子树,直到满足停止条件

首先是第一步,题目使用的是信息增益:

那么我们首先要定义函数来计算信息熵:

import math

def counter(labels):

counts = {}

for x in labels:

if x notin counts:

counts[x] = 0

counts[x] += 1

return counts

def entropy_item(x, total):

p = x / total

return p * math.log2(p)

def calculate_entropy(labels):

lab_cnt = counter(labels)

tot_cnt = len(labels)

entropy = -sum(entropy_item(count, tot_cnt) for count in lab_cnt.values())

return entropy

然后是定义函数来计算信息增益:

def get_attr_val(examples, attr):

return [example[attr] for example in examples]

def get_unique_vals(examples, attr):

return set(get_attr_val(examples, attr))

def get_subset(examples, attr, value):

return [example for example in examples if example[attr] == value]

def calculate_information_gain(examples, attr, target_attr):

targets = get_attr_val(examples, target_attr)

total_entropy = calculate_entropy(targets)

values = get_unique_vals(examples, attr)

attr_entropy = 0

for value in values:

subset = get_subset(examples, attr, value)

entropy = calculate_entropy(get_attr_val(subset, target_attr))

attr_entropy += (len(subset) / len(examples)) * entropy

return total_entropy - attr_entropy

有了信息增益函数,就可以通过它来得到最佳分裂特征,然后按照此特征切分数据,进行递归,重复这一过程,直到退出。

def majority(examples, target_attr):

x = counter(get_attr_val(examples, target_attr))

return max(x, key=x.get)

def learn_decision_tree(examples, attributes, target_attr):

ifnot examples:

return'No examples'

targets = get_unique_vals(examples, target_attr)

if len(targets) == 1:

return list(targets)[0]

ifnot attributes:

return majority(examples, target_attr)

gains = {attr: calculate_information_gain(examples, attr, target_attr) for attr in attributes}

best_attr = max(gains, key=gains.get)

tree = {best_attr: {}}

for val in get_unique_vals(examples, best_attr):

subset = get_subset(examples, best_attr, val)

new_attributes = attributes.copy()

new_attributes.remove(best_attr)

subtree = learn_decision_tree(subset, new_attributes, target_attr)

tree[best_attr][val] = subtree

return tree